The Challenge

What Discover Was Facing

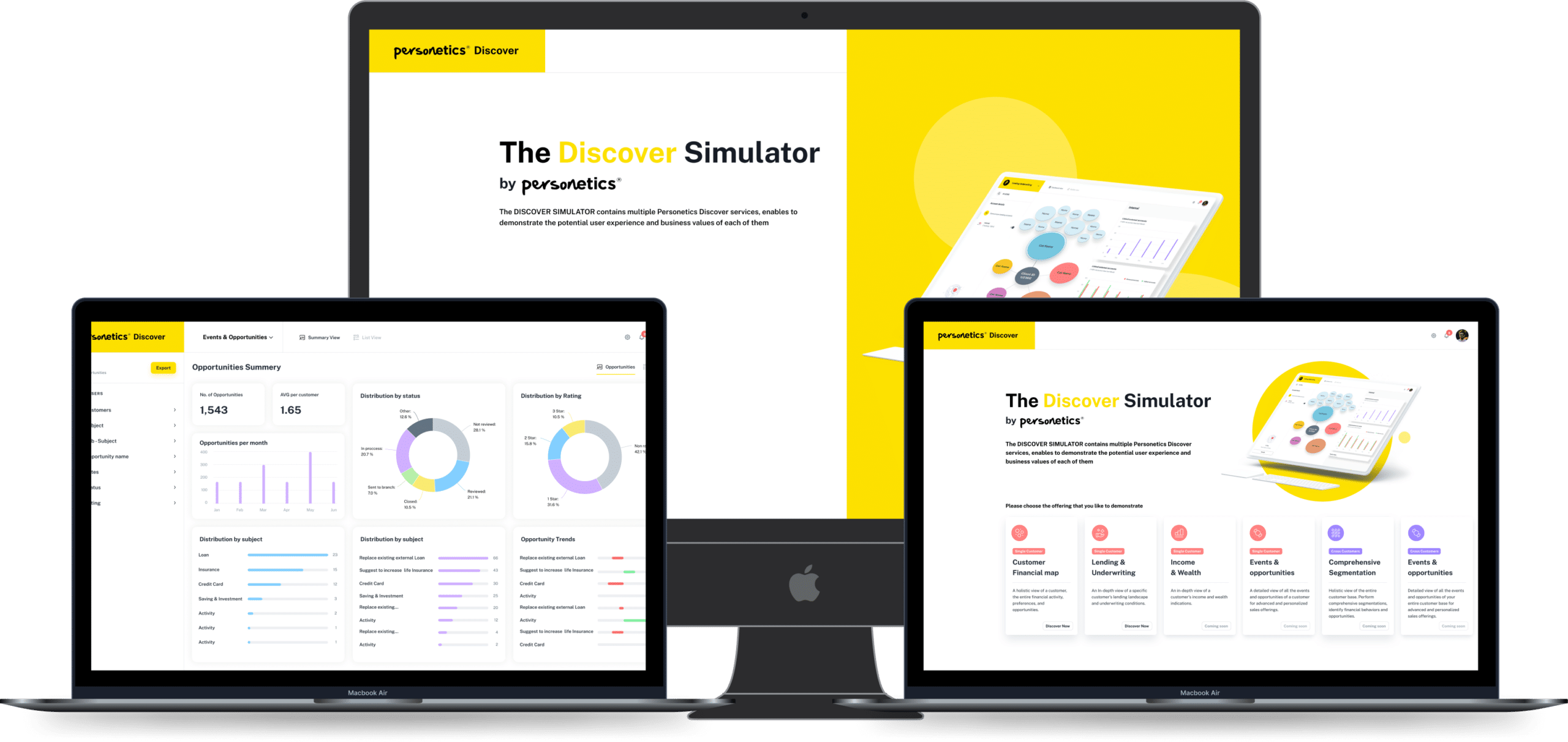

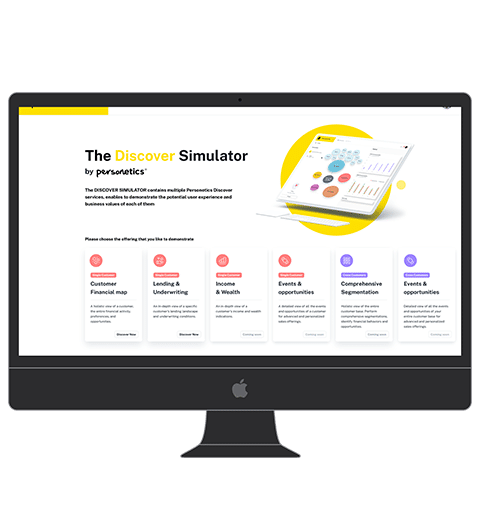

Discover delivers personalised content recommendations. It needed recommendation infrastructure that could serve predictions in under 100ms while continuously incorporating new user behaviour signals. The team's ML models performed well in offline evaluation, but the serving infrastructure (a simple REST endpoint that called the model synchronously) could not meet latency targets at production traffic volumes.

The Solution

What We Built

We designed the recommendation infrastructure around a real-time feature store built on Redis. A Flink streaming job consumed Kafka user-event topics and pre-computed user and item feature vectors over a rolling 30-minute window. The model serving layer used a dedicated inference server (Triton) running quantised models and read the pre-computed features from Redis, which removed feature computation from the hot path. A/B experiment assignments were stored in a low-latency flag service backed by DynamoDB, so that several recommendation algorithms could be tested as simultaneous variants. Each model update passed a shadow-traffic validation step before full promotion.
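To make the split between pre-computation and the hot path concrete, here is a minimal Python sketch of the idea. It is illustrative only and is not the production code: a plain dict stands in for Redis, the `RollingFeatureJob` class stands in for the Flink job, and `toy_score` stands in for the call to the inference server. All names, the key format `user:<id>`, and the per-category count features are assumptions made for this example.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 30 * 60  # rolling 30-minute window, as described above


class RollingFeatureJob:
    """Hypothetical stand-in for the streaming job: per-user category counts over the window."""

    def __init__(self, store, window_seconds=WINDOW_SECONDS):
        self.store = store                  # dict standing in for the Redis feature store
        self.window_seconds = window_seconds
        self.events = defaultdict(deque)    # user_id -> deque of (timestamp, category)

    def on_event(self, user_id, category, timestamp):
        """Consume one user event and refresh that user's stored feature vector."""
        window = self.events[user_id]
        window.append((timestamp, category))
        # Evict events older than the rolling window (assumes in-order timestamps).
        while window and timestamp - window[0][0] > self.window_seconds:
            window.popleft()
        counts = defaultdict(int)
        for _, cat in window:
            counts[cat] += 1
        self.store[f"user:{user_id}"] = dict(counts)


def recommend(user_id, candidates, store, score):
    """Hot path: one key lookup for features, then scoring. No feature computation here."""
    features = store.get(f"user:{user_id}", {})  # unseen users get empty features
    return sorted(candidates, key=lambda item: score(features, item), reverse=True)


def toy_score(features, item):
    """Hypothetical scorer standing in for the model call to the inference server."""
    return features.get(item["category"], 0)
```

In this sketch, every incoming event updates the stored features, so a call such as `recommend("u1", items, store, toy_score)` only performs a lookup and a scoring pass. That is the property the design relies on: request latency does not depend on how expensive the features are to compute.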

Results